In music, orchestration is the process that ensures a large ensemble of individual instruments play their parts on time and in tempo. In development, orchestration keeps software processes, workflows, systems and services running at the right time and in the right order.

While musical orchestras have been around for about 500 years, software orchestration emerged recently, to meet the demand for high-quality, scalable and performant applications. In development, orchestration automates a process or workflow across multiple environments, and it coordinates and manages a complex web of systems and services.

Now, orchestration has further expanded to manage actual application tasks, such as creating ML-powered products. That makes it more important than ever to lower the barriers between building, deploying and orchestrating services, so that orchestration can handle the heavy lifting: running an application on a schedule, assigning resources on demand, rolling back a project to a different version, handling multi-cloud environments, spinning up containers on demand and more.

An orchestrator coordinates larger processes or workflows that include multiple tasks. Automation is a subset of orchestration because orchestration chains together automated tasks as an end-to-end process.

Orchestration supports several fields: cloud, security, containers and data. This has given rise to an assortment of orchestration tools. On one hand, data/ML orchestrators like Flyte, Dagster, Kubeflow Pipelines and Prefect are used to construct data pipelines; on the other, container orchestrators like HashiCorp Nomad and Rancher simplify orchestration at scale. Meanwhile, Apache Airflow is a popular way to schedule data pipelines.

This article provides a high-level overview of the history of orchestration and dives into two specific categories — data and ML orchestration. You’ll gain an understanding of what each orchestration technique encompasses and what works best in your case.

History of Orchestration

The proliferation and growth of orchestration were catalyzed by three developments. In 1987, Paul Vixie released Vixie cron, a tool for automated scheduling. Next, developers released graphical ETL tools (like Informatica PowerCenter and Talend) that added visual pipeline design on top of scheduled execution.

And in the past 10 years, a whole host of new tools has been created for data pipelines, like Apache Airflow, Luigi and Oozie. These tools simplify the way different systems talk to each other. While many people still use these relatively simple tools, more sophisticated offerings like Prefect, Dagster and Flyte enhance the UX, improve the scalability of workflows, support greater dynamism and help the orchestration ecosystem keep pace with growing challenges.

In the next section, let’s look into two specific kinds of orchestrators: data and ML.

Data Orchestration

Data orchestration is the process of gathering, cleansing, organizing and analyzing data to make informed decisions. It can be done manually or with the help of orchestrators that automate much of the process.

Data orchestration can involve several steps that prepare data to be consumed further down the pipeline.

Data integration combines multiple data sets into one, pulling data from multiple sources into a single view and eliminating data silos. Airbyte is among the popular tools developed to automate this process.

Data quality improvement identifies and corrects errors in data. Tools like Great Expectations, Pandera and Pydantic help ensure data quality; each can be integrated into your orchestration pipelines.
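
To make the idea concrete, here is a minimal sketch of the kind of check these tools automate. It uses plain Python rather than any of the libraries above, and the record fields (`user_id`, `age`) and the `validate_record` helper are hypothetical, chosen only for illustration.

```python
from typing import Any


def validate_record(record: dict[str, Any]) -> list[str]:
    """Return a list of problems found in one record (empty if it is clean)."""
    problems = []
    if not isinstance(record.get("user_id"), int):
        problems.append("user_id must be an integer")
    age = record.get("age")
    if not isinstance(age, (int, float)) or not 0 <= age <= 120:
        problems.append("age must be a number between 0 and 120")
    return problems


records = [
    {"user_id": 1, "age": 34},
    {"user_id": "2", "age": 29},  # wrong type for user_id
    {"user_id": 3, "age": -5},    # out-of-range age
]

# Map the position of each failing record to its list of problems.
report = {}
for index, record in enumerate(records):
    problems = validate_record(record)
    if problems:
        report[index] = problems
```

In an orchestrated pipeline, a step like this would typically run right after ingestion, and a non-empty `report` could fail the run or route bad records aside.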

Data enrichment adds more information to a dataset by connecting it with other datasets or by creating new fields. Enrichment can make a dataset more useful, or more representative of the population it is trying to describe.

Data cleansing ensures data is accurate, complete and consistent. It checks that there are no duplicates or bad records in the dataset and that all the records in the dataset are valid and error-free. How you cleanse data depends heavily on the dataset at hand. It typically involves getting the data into a state where it is ready to proceed further along the pipeline. Making data cleansing part of the orchestration pipeline could mean data is cleansed automatically as soon as it arrives.
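
A cleansing step can be as small as the sketch below, which drops incomplete rows and duplicates. The field names (`order_id`, `amount`) and the `cleanse` helper are assumptions made for this example; real cleansing rules depend on your dataset.

```python
def cleanse(rows: list[dict]) -> list[dict]:
    """Drop incomplete rows and duplicates, keeping first occurrences."""
    required = ("order_id", "amount")
    seen = set()
    cleaned = []
    for row in rows:
        # Skip rows that are missing a required field.
        if any(row.get(field) is None for field in required):
            continue
        # Treat rows with the same required-field values as duplicates.
        key = tuple(row[field] for field in required)
        if key in seen:
            continue
        seen.add(key)
        cleaned.append(row)
    return cleaned


raw = [
    {"order_id": 1, "amount": 9.5},
    {"order_id": 1, "amount": 9.5},   # duplicate
    {"order_id": 2, "amount": None},  # incomplete
    {"order_id": 3, "amount": 4.0},
]
clean = cleanse(raw)
```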

Taken together, data orchestration ensures that each phase runs in a specified order and is fault tolerant, and that the pipeline runs smoothly and on a regular cadence if necessary. Some features of a data orchestrator include:

- Scheduling: Data pipelines need to run regularly to ensure the data doesn’t go stale

- Versioning: Often we switch among various versions of code, sometimes to roll back to a working version

- DAGs: Directed acyclic graphs (DAGs) help portray a clear picture of the data dependencies

- Parameterization: We may want to reuse the same processing logic given different inputs

- Data lineage: Understanding and visualizing data as it flows is a crucial component of a data orchestrator
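
Two of these features, DAGs and parameterization, can be sketched with nothing but the Python standard library. The example below is a toy, not how any of the orchestrators named in this article work internally; the task names and the `run_pipeline` helper are hypothetical.

```python
from graphlib import TopologicalSorter


def extract(run_date):
    # Stand-in for pulling the rows that belong to one run date.
    return [f"{run_date}-row-{i}" for i in range(3)]


def clean(rows):
    return [row.upper() for row in rows]


def load(rows):
    # Stand-in for writing rows to a destination; returns the row count.
    return len(rows)


# Each task maps to the set of tasks it depends on.
dag = {"extract": set(), "clean": {"extract"}, "load": {"clean"}}
tasks = {"extract": extract, "clean": clean, "load": load}


def run_pipeline(run_date: str) -> dict:
    """Run tasks in dependency order; `run_date` parameterizes the run."""
    results = {}
    for name in TopologicalSorter(dag).static_order():
        upstream = [results[dep] for dep in dag[name]]
        # Root tasks receive the run parameter; others receive upstream output.
        args = upstream if upstream else [run_date]
        results[name] = tasks[name](*args)
    return results


results = run_pipeline("2024-01-01")
```

Calling `run_pipeline` with a different date reuses the same logic on different inputs. A real orchestrator adds scheduling, versioning, retries and lineage tracking on top of this ordering.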

Data orchestrators like Flyte, Prefect and Dagster provide the tooling to implement data orchestration: they integrate with data tools so that every phase of the process can be automated. Databricks, a popular data-processing tool, can be used alongside these orchestrators to build powerful data pipelines.

ML Orchestration

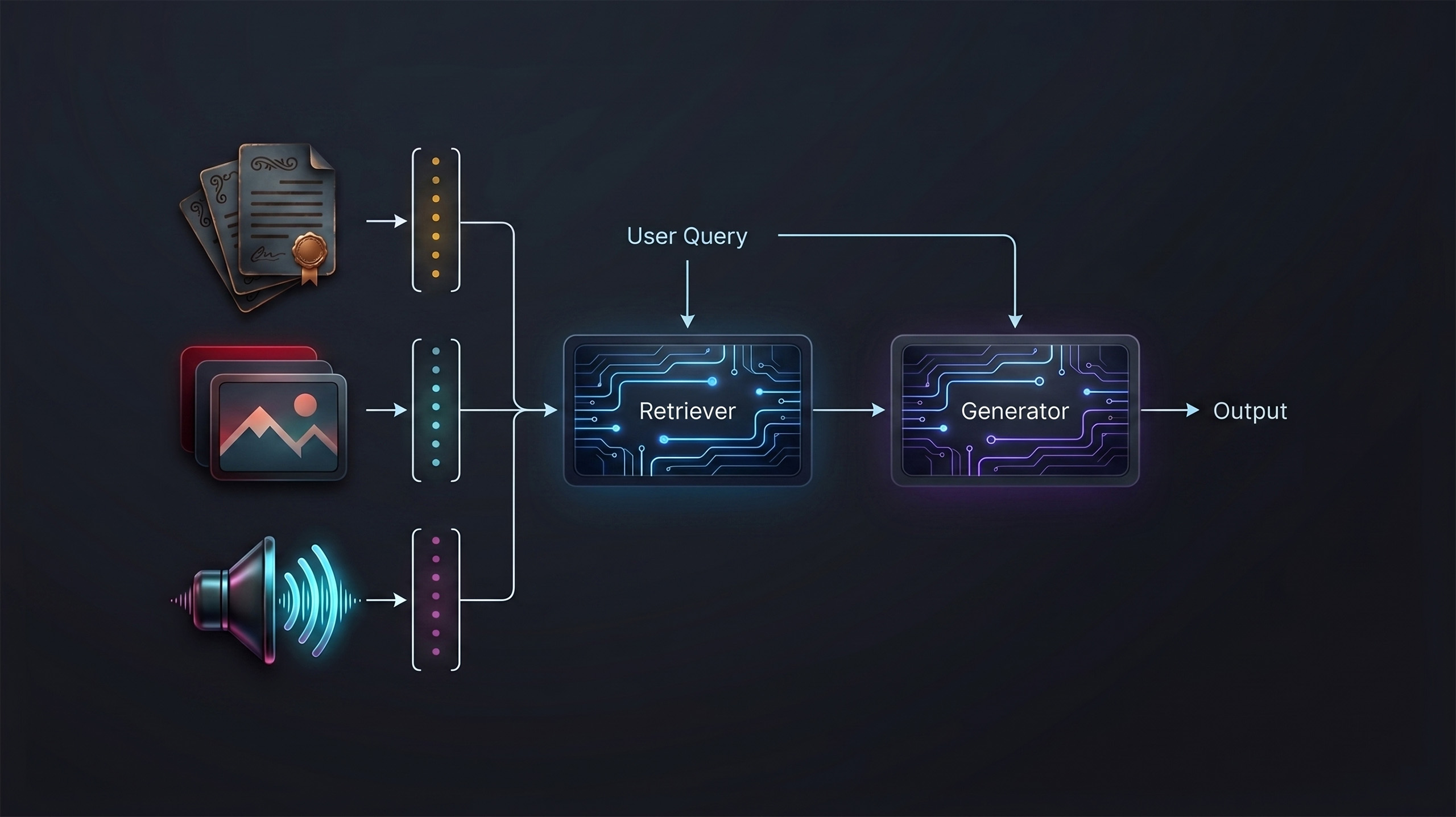

Machine learning orchestration (MLO) differs from data orchestration in that it is built to handle scale. Because they’re designed to support ML, ML orchestrators manage compute resources much more easily than data orchestrators do.

ML orchestrators have also been developed to handle the demand for dynamism in ML development. MLO enables effective implementation, management and deployment of production-grade ML pipelines, which simplifies building ML-powered products.

An ML orchestrator also does more than a data orchestrator. For example, ML orchestration can include supporting a model registry, extensive scalability, dynamism, model observability and more.

Implementing an ML orchestrator simplifies iteration through ML development cycles. An ML development cycle involves creating features, setting up the training process, evaluating the model, saving and deploying the best model, and monitoring it. Let’s take a look at each of these components more closely.

Data featurization turns raw data into features that can train ML algorithms. Raw data is rarely in a format that is consumable by an ML model. Turning it into consumable features is called feature engineering. To simplify feature handoff within an ML team, some teams use feature stores, which store and serve features across offline training and online inference. Tecton, Feast, and Hopsworks are some prominent feature stores that orchestrate the feature-engineering pipeline.
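
As a small illustration of feature engineering, the sketch below converts one raw record into a numeric vector. The ride record, its field names and the `CATEGORIES` vocabulary are all hypothetical; feature stores such as those named above manage this kind of logic at much larger scale.

```python
from datetime import datetime

CATEGORIES = ("economy", "premium")  # hypothetical category vocabulary


def featurize(raw: dict) -> list[float]:
    """Convert one raw ride record into a numeric feature vector."""
    pickup = datetime.fromisoformat(raw["pickup_time"])
    # One-hot encode the categorical field.
    one_hot = [1.0 if raw["tier"] == category else 0.0 for category in CATEGORIES]
    return [
        float(pickup.hour),                      # hour of day
        1.0 if pickup.weekday() >= 5 else 0.0,   # weekend flag
        raw["distance_km"],
        *one_hot,
    ]


features = featurize(
    {"pickup_time": "2024-01-06T18:30:00", "tier": "premium", "distance_km": 7.2}
)
```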

Model training trains an ML model on features. The training process requires significant computing power and can be performed any number of times to arrive at a good model. Frameworks like PyTorch, TensorFlow, and JAX are helpful to train models.

Model evaluation tests and evaluates the performance of an ML model. It helps determine whether the model is ready to be deployed or served in production. The ML frameworks provide techniques to evaluate models, and tools like Weights & Biases and MLflow can log metrics and visualize experiments.
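
An evaluation step often ends in a simple gate that decides whether a candidate model moves forward. The sketch below shows one possible gate in plain Python; the `ready_for_production` helper, the `min_gain` threshold and the baseline value are assumptions for illustration, not a recommended policy.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    if len(y_true) != len(y_pred):
        raise ValueError("label and prediction lists must have equal length")
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def ready_for_production(candidate_acc, baseline_acc, min_gain=0.01):
    """Promote the candidate only if it beats the baseline by `min_gain`."""
    return candidate_acc - baseline_acc >= min_gain


y_true = [1, 0, 1, 1, 0, 1, 0, 0]
y_pred = [1, 0, 1, 0, 0, 1, 0, 1]

candidate_acc = accuracy(y_true, y_pred)
promote = ready_for_production(candidate_acc, baseline_acc=0.70)
```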

Model deployment puts the model into production. Deployment can happen on a regular cadence to keep up with an updated or re-trained model. Deployment could mean several things, including dependency management, performance optimization and horizontal scaling. Every aspect of model deployment is associated with a range of tools. For example, dependency management can be achieved using Docker, or using more sophisticated tools like BentoML, which also handles serving the models. Performance optimization means tuning both the model and the way it is served. ML frameworks can be used in this case, along with other offerings like Ray Serve and Triton.

Model monitoring captures performance degradation, health, and data and concept drift over time. The goal of model monitoring is to identify the right time to retrain or update a model. Tools like Grafana, Prometheus, and Arize are helpful to monitor ML models.
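
One crude way to flag data drift is to measure how far the mean of a live feature has moved from its training-time mean. The sketch below is a simple heuristic written for this article, not what the monitoring tools above implement; the `threshold` of 2.0 standard deviations and the sample values are arbitrary assumptions.

```python
from statistics import mean, stdev


def mean_shift(reference, live):
    """Shift of the live mean from the reference mean, in reference std devs."""
    return abs(mean(live) - mean(reference)) / stdev(reference)


def needs_retraining(reference, live, threshold=2.0):
    """Flag drift when the live mean moves more than `threshold` std devs."""
    return mean_shift(reference, live) > threshold


# Feature values seen at training time versus two windows of live traffic.
reference = [10, 12, 11, 9, 10, 11, 12, 9]
live_stable = [10, 11, 12, 10]
live_drifted = [15, 16, 14, 17]

stable_flag = needs_retraining(reference, live_stable)
drifted_flag = needs_retraining(reference, live_drifted)
```

An orchestrator could run a check like this on a schedule and trigger the training pipeline when the flag is raised.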

ML orchestration means orchestrating all these phases of the ML pipeline end to end. Besides the tools that implement each phase, it’s beneficial to have an orchestrator that controls all the pipeline components. Key features include:

- Scheduling: ML models may need to be retrained on a regular schedule to make sure they don’t go stale, especially in the case of online learning

- Versioning: It’s crucial to ensure that ML models and data are versioned. This avoids wasting resources if training needs to happen again and the previous versions aren’t available

- Resource management: ML is compute- and resource-intensive. Resources and infrastructure need to be controlled to ensure scalability of the ML pipelines

- Dynamic workflows: ML pipelines are highly dynamic. The ability to construct dynamic workflows simplifies model experimentation

- Caching: Since ML is compute-intensive, caching avoids re-running the executions when the input remains the same
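
Caching and versioning work together: a cached result is only safe to reuse when both the inputs and the code version are unchanged. Here is a minimal in-memory sketch of that idea. The `run_cached` helper and the version strings are hypothetical, and real orchestrators persist their caches rather than keeping them in a dictionary.

```python
import hashlib
import json

cache = {}       # cache key -> stored task output
executions = []  # records every real (non-cached) run, for illustration


def cache_key(task_name, version, inputs):
    """Hash the task name, code version and inputs into a stable key."""
    payload = json.dumps(
        {"task": task_name, "version": version, "inputs": inputs},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def run_cached(task, version, **inputs):
    """Run `task` only if this version has not seen these inputs before."""
    key = cache_key(task.__name__, version, inputs)
    if key not in cache:
        cache[key] = task(**inputs)
    return cache[key]


def train(learning_rate, epochs):
    # Stand-in for an expensive training job.
    executions.append((learning_rate, epochs))
    return {"learning_rate": learning_rate, "epochs": epochs}


first = run_cached(train, "v1", learning_rate=0.1, epochs=10)   # executes
second = run_cached(train, "v1", learning_rate=0.1, epochs=10)  # cache hit
third = run_cached(train, "v2", learning_rate=0.1, epochs=10)   # new version, executes
```

Bumping the version string invalidates old entries without deleting them, so rolling back to `"v1"` would hit the cache again.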

ML orchestrators like Flyte, Kubeflow Pipelines and ZenML can be used to orchestrate ML pipelines seamlessly.

Infrastructure Orchestration

A third vertical is infrastructure orchestration. Infrastructure orchestration automates the provisioning and management of infrastructure services. This is essential to orchestrating ML pipelines because ML is compute-intensive. Data pipelines may need an infrastructure orchestrator as well, depending on their complexity. Infrastructure orchestration is typically achieved through containerization using tools like Docker and Kubernetes.

ML orchestrators Flyte and Kubeflow Pipelines also support infrastructure orchestration.

Combining ML and Data Orchestration

The ML development cycle needs to handle changes on the fly. As Union.ai CEO Ketan Umare observes, “What sets machine learning apart from traditional software is that ML products must adapt rapidly in response to new demands.”

Often it’s desirable to combine data and ML orchestration when an application is orchestrated at both levels. While Flyte can serve as the orchestrator for each, some companies run a separate data orchestrator and ML orchestrator. (Consider Lyft, which ended up implementing a combination of Airflow and Flyte.)

“Airflow is better suited for [data pipelining], where we orchestrate computations performed on external systems,” Constantine Slisenka, a senior software engineer at Lyft, mentions in an article titled “Orchestrating Data Pipelines at Lyft: comparing Flyte and Airflow,” which lists points to consider when choosing one or more orchestration tools.

Which orchestrator should you use?

This may seem like a no-brainer: Data pipelines → data orchestrator, and ML pipelines → ML orchestrator. In fact, several tools claim to orchestrate both data and ML, and even choosing between data and ML orchestrators is tricky.

Here’s a checklist to help you find the right orchestrator for your use case:

- How scalable should your pipelines be? Can you tolerate sporadic scalability?

If scalability is a priority, pick tools that are infrastructure-oriented, or support containerization.

- Do you need infrastructure automation?

Teams that build ML pipelines benefit from automating resource allocation and management. If this describes you, choose a platform that eliminates the need to manage infrastructure manually or supports infrastructure-as-code (IaC).

- Do you need custom environments?

Custom environments segregate dependencies among the pipelines. Containerization is often the go-to process to manage these dependencies. Look for tools that provide off-the-shelf containerization.

- How skilled is your team?

Consider your in-house skillset when orchestrating pipelines: There’s an inevitable tradeoff between the skillset and the orchestration requirements. Consider Kubeflow Pipelines as an example. You’d need to write YAML configuration files to get a Kubeflow deployment up and running. If Kubeflow Pipelines seems like the right platform for you, will your team be able to manage YAMLs? Is your team proficient in orchestration? If not, you may have to rethink your choice.

- What’s your preferred learning curve?

There are tools that aren’t so easy to get started with but provide lots of features, and there are some that are quite easy to get started with but may not encompass the required features. Of course, there are tools that tick both checkboxes. But when weighing your choices, consider the learning curve you prefer. If you need to deploy the tool right away, the fast track will work better, but if you’re able to devote some time to understand the tool, give the other category a try.

- How often do your pipelines change?

Are your pipelines static or dynamic? If dynamic, does your tool support that?

- Is there a specific integration that your team needs?

Some ML teams depend on a specific integration, such as Spark. If you are in that category, ensure the orchestrator actively supports the integration.

- Would using an ML orchestrator be overkill?

ML orchestrators support at least some ML functions. If you just want to orchestrate data pipelines, would using an ML orchestrator be excessive? Probably not if your data pipelines are resource intensive and you need some visualization. If your data pipelines are simple and you need a scheduler to run your pipelines on a regular cadence, a data orchestrator should suffice.

Every orchestration platform has its pros and cons: some work best for data, some are best suited for ML, and some are good for both use cases. It might also be that a single orchestration tool cannot satisfy all your orchestration needs. Consider your particular use case to choose your orchestration tool(s) wisely.