Resources

Where ideas, innovation, and community come together.

How Dragonfly scales agentic research across 250k products

AI

Agentic AI

Inference

Woven by Toyota saves millions and scales autonomous driving with Union.ai

Autonomous Systems

Data Processing

Model Training

Inference

Rezo accelerates drug discovery while saving >90% on compute costs with Union.ai

Biotech & Healthcare

Inference

Data Processing

Artera scales personalized cancer therapy with Union.ai

Biotech & Healthcare

Inference

Data Processing

Model Training

Porch accelerates data and ML operations by migrating from Airflow to Union.ai

Financial Services & Fintech

Data Processing

Model Training

How to Build Self-Healing Agents

Union.ai

Flyte

AI

Agentic AI

We Compared the Data Models of Every Major AI Orchestrator

Union.ai

Flyte

Data Processing

Training & Finetuning

Inference

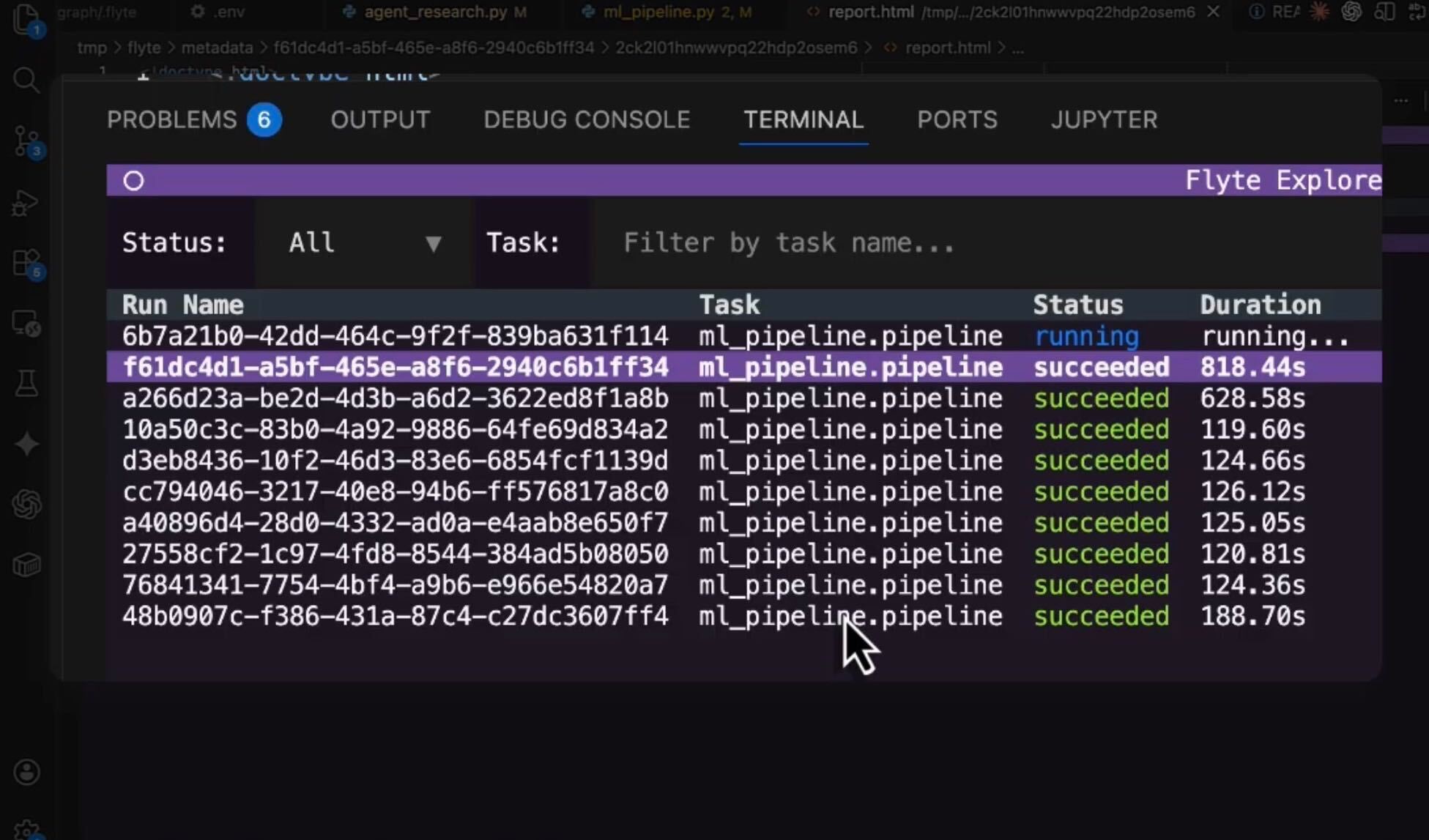

Flyte 2 OSS: Now Available for Local Execution

Union.ai

Flyte

Data Processing

Training & Finetuning

Inference
