AI orchestration shouldn’t slow you down where it matters most.

Union.ai, the enterprise Flyte platform, is AI orchestration built for fast iteration. Go from local dev to production in minutes, not hours.

For AI/ML, iteration speed is what defines winners and losers

The teams winning in AI/ML can try something, see if it works, and try the next thing faster than everyone else.

That loop — write, test, deploy, debug, repeat — is where AI products are actually built. And your orchestration layer sits at the center of it. It determines:

- How fast you can experiment. Can you run a workflow locally before pushing to production, or does every change require a cloud deployment?

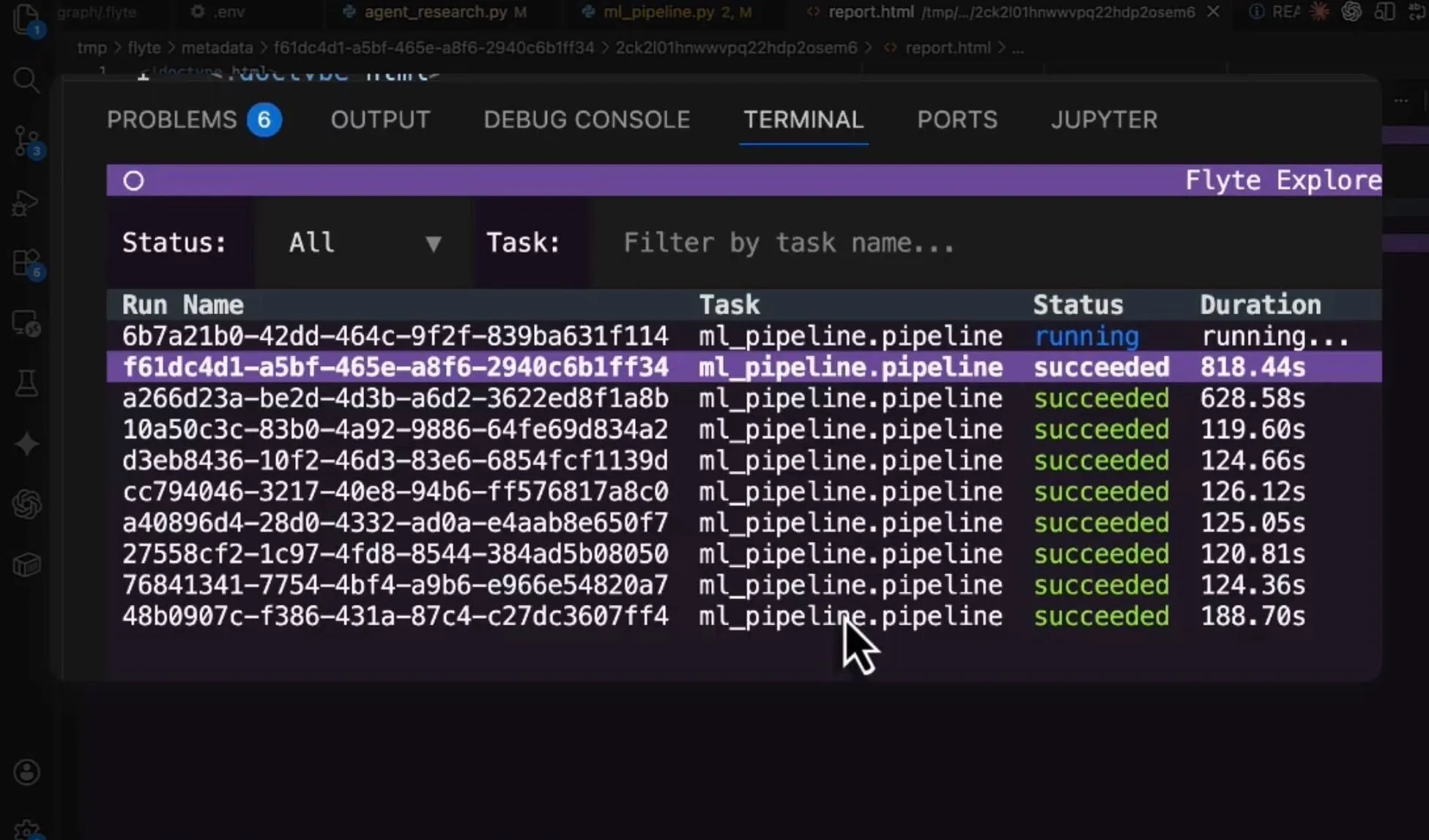

- How clearly you can debug. When something fails, can you find the problem in minutes, or are you hunting across multiple surfaces for an hour?

- How confidently you can ship. Does your system catch data mismatches and type errors before runtime, or do they surface three steps deep?

When your orchestration layer is fast and tight, your team compounds improvements daily. When it's slow and clunky, every experiment costs more time than it should.

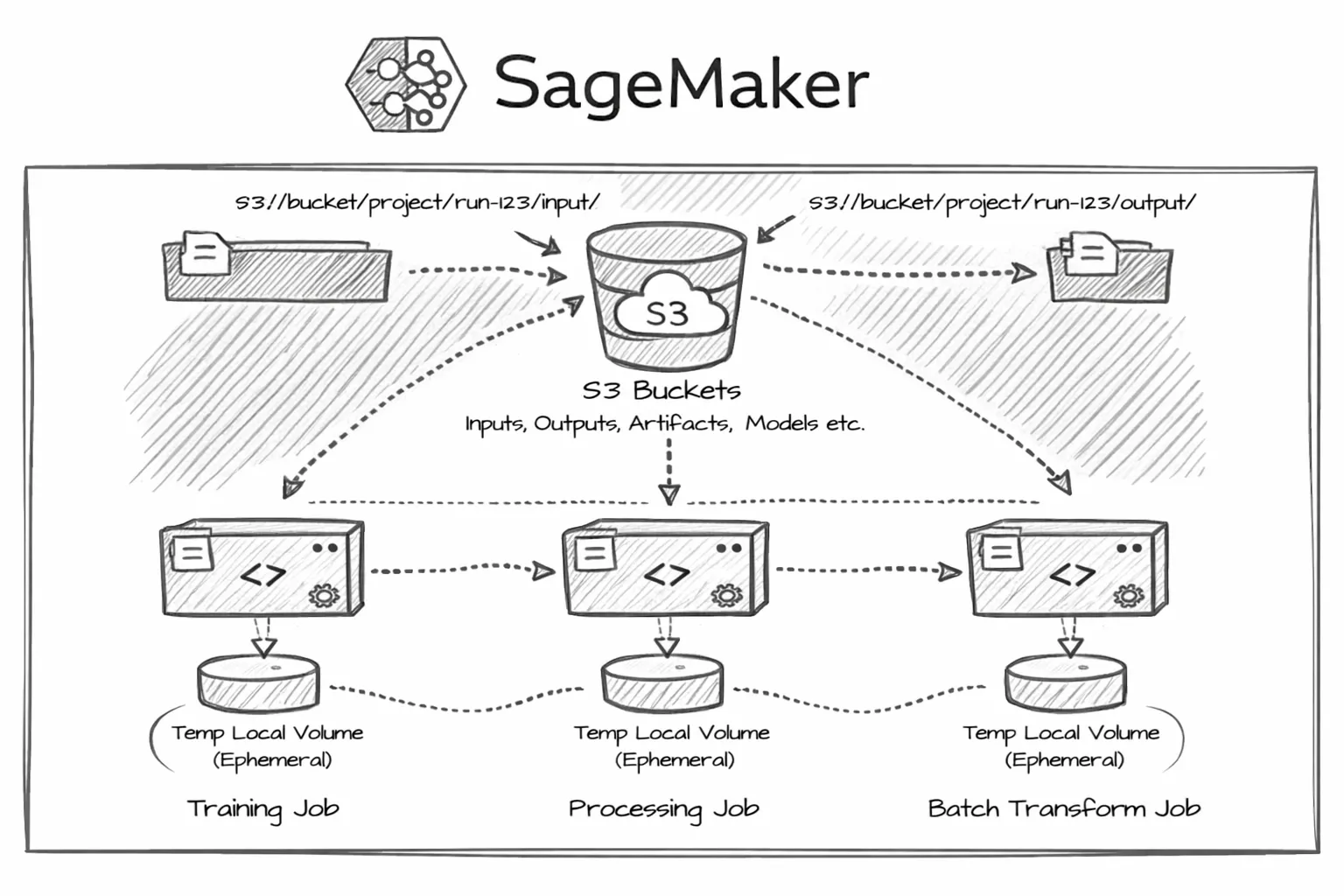

SageMaker is too slow and clunky for modern AI/ML

SageMaker is a sprawling ML platform that creates friction for AI/ML teams:

- Limited local development. SageMaker Pipelines' local mode covers only a subset of step types, so many changes still require pushing to SageMaker's cloud environment and waiting for provisioning.

- Verbose, config-heavy pipelines. Writing a SageMaker pipeline feels more like wiring up AWS service calls than writing Python.

- Fragmented debugging. Logs in CloudWatch, metadata in the SageMaker console, artifacts in S3. When a pipeline fails, you're jumping between AWS surfaces to piece together what happened.

- Rigid execution. Dynamic branching, where the workflow adapts at runtime based on intermediate results, is limited.

- No type safety across tasks. Passing data between steps means managing S3 paths and serialization yourself. Errors surface deep in execution instead of at definition time.
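To make "errors at definition time" concrete, here is a minimal sketch in plain Python. It is not the Flyte or Union.ai API; `check_edge` and the three task functions are hypothetical names invented for this illustration. The idea is simply that when tasks declare typed inputs and outputs, a mismatch between two steps can be caught while the pipeline is being wired together, before anything runs.

```python
from typing import Callable, get_type_hints


def check_edge(upstream: Callable, downstream: Callable, param: str) -> None:
    """Raise TypeError if upstream's return type differs from downstream's `param` type.

    Hypothetical helper for illustration only; it compares annotations and
    never calls either function.
    """
    produced = get_type_hints(upstream).get("return")
    expected = get_type_hints(downstream).get(param)
    if produced != expected:
        raise TypeError(
            f"{upstream.__name__} returns {produced}, "
            f"but {downstream.__name__}({param}) expects {expected}"
        )


# Hypothetical tasks with typed signatures.
def load_rows() -> list[dict]:
    return [{"x": 1.0, "y": 2.0}]


def train(rows: list[dict]) -> float:
    return sum(r["y"] for r in rows) / len(rows)


def evaluate(model_path: str) -> float:
    return 0.0


# Wiring load_rows -> train is consistent, so this passes silently.
check_edge(load_rows, train, "rows")

# Wiring train -> evaluate is a mismatch (float vs. str) and is rejected
# before any task executes.
try:
    check_edge(train, evaluate, "model_path")
except TypeError as err:
    wiring_error = str(err)
```

A real orchestrator would run a check like this across every edge of the workflow graph; the sketch covers a single edge to keep the mechanism visible.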

Union.ai is built for fast, developer-loved iteration

Union.ai, the enterprise Flyte platform, is expressly designed for fast iteration and developer happiness:

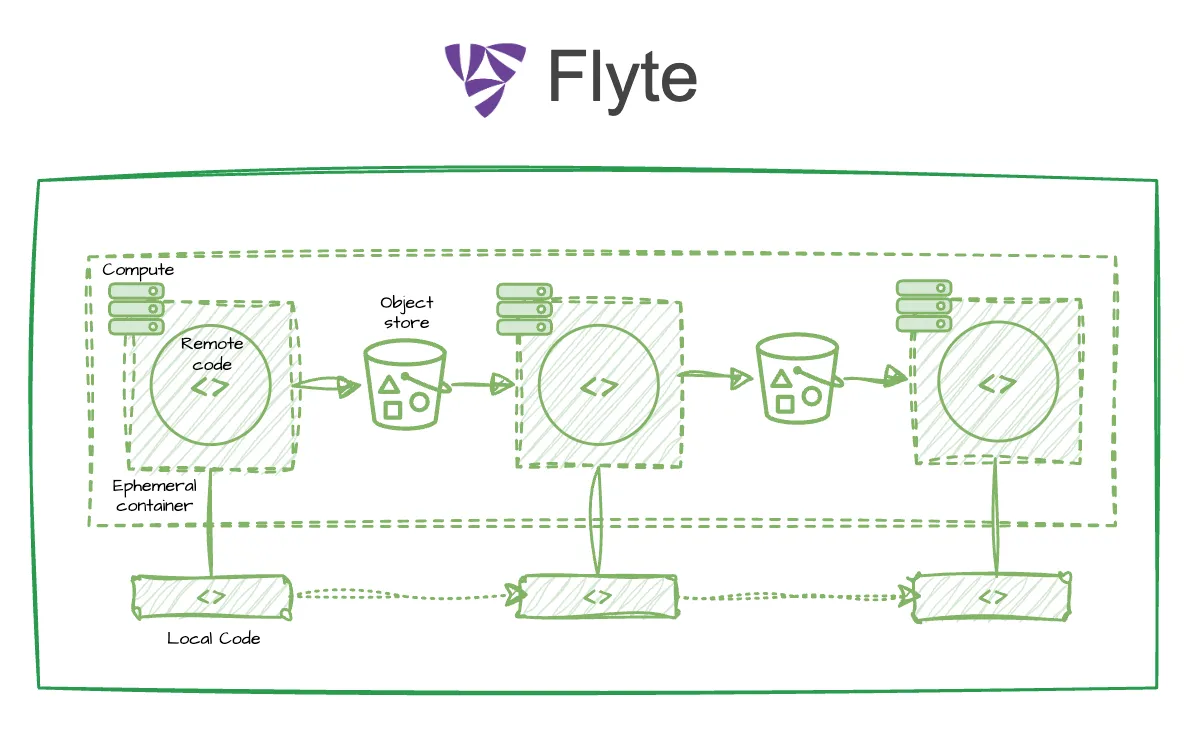

- Local to production in minutes. Write and test workflows on your laptop in pure Python, then deploy to your cloud.

- Self-healing, so pipelines that fail recover autonomously and continue

- Dynamic, so your AI systems and agents can make decisions on the fly at runtime

- Compute-aware, operating in your cloud and auto-scaling to optimize usage

- Scalable and efficient, handling large task fanout and parallelism with ease
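As a rough illustration of three of the properties above (self-healing, dynamic, and fanout), here is a sketch that uses only the Python standard library. It is not Flyte or Union.ai code, and `with_retries`, `flaky_square`, and `run_pipeline` are hypothetical names. It assumes that "self-healing" can be approximated by retrying a failed task with backoff, and that "dynamic" means the shape of the work is decided from an intermediate result at runtime.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, TypeVar

T = TypeVar("T")


def with_retries(fn: Callable[[], T], attempts: int = 3, base_delay: float = 0.01) -> T:
    """Call fn, retrying with exponential backoff; re-raise after the final attempt."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return fn()
        except Exception:
            if attempt == attempts:
                raise
            time.sleep(base_delay * 2 ** (attempt - 1))


_seen: set[int] = set()


def flaky_square(n: int) -> int:
    """Hypothetical task: fails the first time it sees each input, then succeeds."""
    if n not in _seen:
        _seen.add(n)
        raise ConnectionError(f"transient failure for input {n}")
    return n * n


def run_pipeline(threshold: int) -> list[int]:
    # Intermediate result: how many items survive is only known at runtime.
    candidates = [n for n in range(10) if n >= threshold]
    if not candidates:
        return []  # dynamic branch: nothing to fan out over
    # Fan out one retried task per candidate; map() preserves input order.
    with ThreadPoolExecutor(max_workers=4) as pool:
        return list(pool.map(lambda n: with_retries(lambda: flaky_square(n)), candidates))


results = run_pipeline(threshold=7)
print(results)  # [49, 64, 81]
```

Every task in this run fails once and recovers on its retry, and the fanout width (three tasks here) comes from the data rather than from the pipeline definition.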

Union.ai is built for production

The platform deploys to your secure cloud and offers:

- Enhanced scale and performance, with significantly improved actions per run, concurrency, and task startup time

- End-to-end AI lifecycle support, including orchestration, training and fine-tuning, and inference

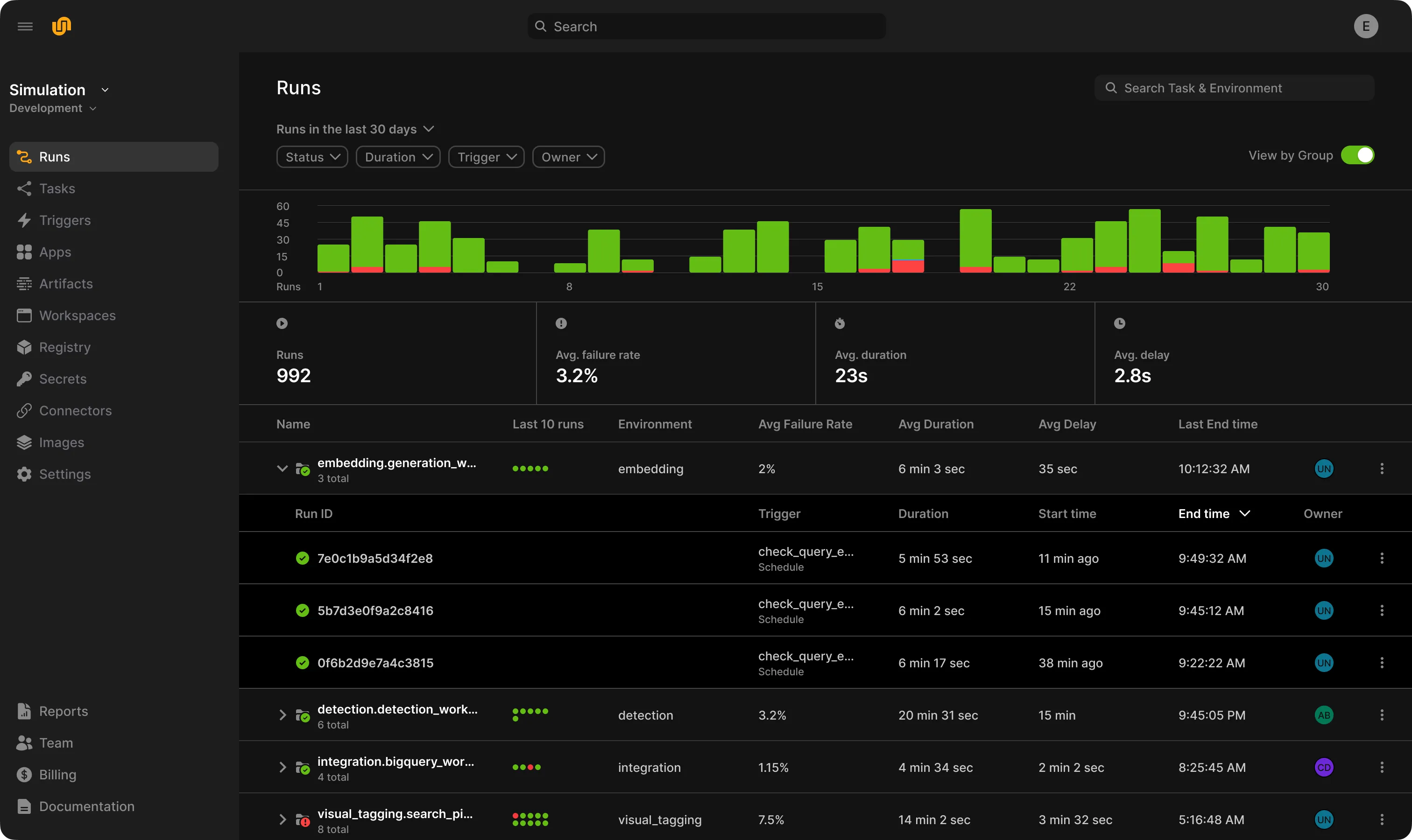

- Developer-loved UI, for faster, easier development cycles

- Observability, including data lineage, resource usage, and failure logs

- Portability to open-source, for teams looking to avoid lock-in

Teams report that Union.ai speeds up the path from prototype to production, cutting iteration cycle time in half.

The Union.ai team offers high-touch support to ensure users are successful.

Flyte 2 OSS: Open-source AI orchestration

Flyte 2 OSS brings Flyte’s core data model, scalability, and reliability to DIY teams. While it lacks some enterprise capabilities of Union.ai, it remains the most capable open-source AI orchestration tool available. It’s trusted by teams worldwide, with 80M+ downloads and growing.

Trusted by 4,000+ companies

Accelerate engineers with tools to make their lives easier.

Let’s chat

What’s a quick chat compared to the hours a week you could save on maintaining infrastructure?